Top LLM Observability Platforms: LangSmith vs Arize vs HoneyHive

LLM observability platforms give you visibility into what happens between a user's prompt and your model's response. This guide compares seven leading platforms across pricing models (per trace vs per seat vs usage-based), tracing depth, evaluation frameworks, and production monitoring. Each entry includes real pricing, honest limitations, and guidance on which teams benefit most.

Why LLM Observability Is Different from Traditional Monitoring

Traditional application monitoring tracks deterministic behavior: request latency, error rates, CPU usage. LLM applications introduce a new problem. The same input can produce different outputs every time, and "wrong" is harder to define than a 500 error.

LLM observability platforms solve three specific problems that standard APM tools cannot:

Trace decomposition. A single agent request might involve a router prompt, three tool calls, a retrieval step, and a final synthesis. When the output is wrong, you need to see which step failed. Traditional monitoring shows you total latency. LLM tracing shows you that retrieval returned irrelevant documents, or that the router picked the wrong tool.

Cost attribution. Token usage varies wildly between requests. A simple question might cost $0.002 while a complex chain costs $0.50. Without per-trace cost tracking, you can't identify which features are burning through your budget or which users are generating expensive queries.
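To make trace decomposition and cost attribution concrete, here is a minimal sketch of a trace modeled as a tree of nested spans, with token cost rolled up from the individual steps. The `Span` class, the step names, and the per-token prices are hypothetical and not taken from any platform's SDK.

```python
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical per-token prices in USD; real prices vary by model and provider.
PRICE_PER_INPUT_TOKEN = 0.000003
PRICE_PER_OUTPUT_TOKEN = 0.000015


@dataclass
class Span:
    """One step in a trace: an LLM call, a tool call, or a retrieval."""
    name: str
    input_tokens: int = 0
    output_tokens: int = 0
    error: Optional[str] = None
    children: list["Span"] = field(default_factory=list)

    def own_cost(self) -> float:
        """Cost of this step alone, excluding children."""
        return (self.input_tokens * PRICE_PER_INPUT_TOKEN
                + self.output_tokens * PRICE_PER_OUTPUT_TOKEN)

    def total_cost(self) -> float:
        """Cost of this step plus everything nested under it."""
        return self.own_cost() + sum(c.total_cost() for c in self.children)

    def failed_steps(self, path: str = "") -> list[str]:
        """Paths of every span in this subtree that recorded an error."""
        here = f"{path}/{self.name}"
        found = [here] if self.error else []
        for child in self.children:
            found.extend(child.failed_steps(here))
        return found


# An illustrative agent request with three child steps.
trace = Span("agent_request", children=[
    Span("router_prompt", input_tokens=200, output_tokens=20),
    Span("retrieval", error="no relevant documents"),
    Span("synthesis", input_tokens=3000, output_tokens=500),
])

print(f"total cost: ${trace.total_cost():.4f}")
print("failed steps:", trace.failed_steps())
```

Summing `total_cost()` per feature or per user is what turns raw token counts into a budget view, and `failed_steps()` points at the step that went wrong rather than at the request as a whole.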

Quality regression detection. When you update a prompt or swap models, you need to know if output quality changed. LLM observability platforms include evaluation frameworks that score outputs against datasets, catching regressions before users notice them.
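A regression check of this kind can be sketched as follows: score a fixed dataset with outputs from the old and new prompt versions, then flag a drop beyond a tolerance. The scorer is a deliberately simple keyword heuristic standing in for an LLM-as-judge, and the function names, dataset, and tolerance are all illustrative.

```python
from statistics import mean


def keyword_score(output, required):
    """Fraction of required keywords found in the output (case-insensitive)."""
    if not required:
        return 1.0
    text = output.lower()
    return sum(k.lower() in text for k in required) / len(required)


def mean_score(outputs, dataset):
    """Average score of a list of outputs against the dataset's expectations."""
    return mean(keyword_score(out, ex["required"])
                for out, ex in zip(outputs, dataset))


def detect_regression(dataset, baseline_outputs, candidate_outputs, tolerance=0.05):
    """Return (baseline_mean, candidate_mean, regressed)."""
    base = mean_score(baseline_outputs, dataset)
    cand = mean_score(candidate_outputs, dataset)
    return base, cand, (base - cand) > tolerance


# Hypothetical evaluation dataset and outputs from two prompt versions.
dataset = [
    {"input": "How do I reset my password?", "required": ["settings", "reset"]},
    {"input": "What is the refund window?", "required": ["30 days"]},
]
baseline = [
    "Open Settings and choose Reset password.",
    "Refunds are accepted within 30 days.",
]
candidate = [
    "Open Settings and choose Reset password.",
    "Refunds are accepted within two weeks.",
]

base, cand, regressed = detect_regression(dataset, baseline, candidate)
print(f"baseline={base:.2f} candidate={cand:.2f} regressed={regressed}")
```

Running a check like this before each prompt or model change is the manual version of what the platforms' evaluation frameworks automate.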

The platforms in this guide all address these three problems, but they take different approaches to pricing, integration depth, and evaluation sophistication.

How We Evaluated These Platforms

We assessed each platform across five dimensions:

- Integration effort. How many lines of code to get traces flowing? Does it require SDK changes or just a proxy swap?

- Tracing depth. Can it decompose multi-step agent workflows? Does it support nested spans, tool calls, and retrieval steps?

- Evaluation framework. Does it include LLM-as-judge scoring, human annotation queues, regression testing against datasets?

- Pricing model. Per trace, per seat, per event, or usage-based? What does the free tier actually include?

- Production readiness. Alerting, dashboards, retention policies, compliance certifications, and team collaboration features.

Every platform listed here is a real, actively maintained product. We verified pricing and features against official documentation as of March 2026.

1. LangSmith

LangSmith is the observability platform from LangChain, the team behind the most widely adopted LLM framework. It has deep, automatic integration with LangChain and LangGraph, but it also works with the OpenAI SDK, Anthropic SDK, Vercel AI SDK, and LlamaIndex.

Key strengths:

- Automatic tracing of LangChain chains and agents with zero configuration. Every retriever call, tool invocation, and LLM request is captured as a nested span.

- A mature evaluation framework with multiple evaluator types: LLM-as-judge, heuristic checks, human annotation queues, and pairwise comparisons. You can write custom evaluators in Python or TypeScript.

- Automatic trace clustering that detects usage patterns, common agent behaviors, and failure modes across your production traffic.

Key limitations:

- Heavily optimized for LangChain. If you're using a different framework, you'll get tracing but miss some of the automatic instrumentation.

- Pricing scales with trace volume. The free tier includes only 5,000 base traces per month with 14-day retention. Base traces cost $2.50 per 1,000 and extended traces (400-day retention) cost $5.00 per 1,000.

Best for: Teams building agents with LangChain or LangGraph who want the tightest possible integration between their framework and their observability layer.

Pricing: Free Developer tier (5,000 traces/month, 1 seat). Plus plan adds unlimited seats, 10,000 traces/month included, with overage at $0.50 per 1,000 base traces.

2. Arize AI (Phoenix)

Arize takes a two-product approach. Phoenix is their open-source observability library, free to self-host with no usage limits. Arize AX is the managed cloud platform with additional enterprise features.

Phoenix is built on OpenTelemetry, which means it's vendor-agnostic and framework-agnostic. You can instrument any Python or JavaScript application regardless of which LLM provider or orchestration framework you use.

Key strengths:

- Open-source core with no feature gates on self-hosted deployments. You get full tracing, evaluation, and dataset management without paying anything.

- UMAP embedding visualizations that help debug retrieval quality in RAG pipelines. You can see clusters of similar queries and identify where your retriever returns irrelevant documents.

- Built-in LLM-as-judge evaluation with both offline (against datasets) and online (against production traffic) scoring.

Key limitations:

- The enterprise platform has a steeper learning curve than proxy-based tools like Helicone. Expect a few hours to get comfortable with the evaluation workflows.

- The managed cloud free tier is limited to 25,000 spans and 1 GB ingestion per month with only 7-day retention.

Best for: Teams that want open-source observability they can self-host, or enterprise teams that need deep embedding analysis and drift detection.

Pricing: Phoenix is free (open source, self-hosted). AX Free: 25,000 spans/month, 7-day retention. AX Pro: $50/month, 15-day retention. AX Enterprise: custom pricing with SOC 2, HIPAA, and configurable retention.

Give Your Agents Persistent Storage Alongside Observability

Trace execution with LangSmith or Arize, store artifacts with Fast.io. The free agent tier includes 50 GB storage, 5,000 credits, and MCP server access. No credit card required.

3. Langfuse

Langfuse is an open-source LLM engineering platform that has grown rapidly since its Y Combinator W23 batch. It covers observability, prompt management, evaluation, and cost tracking in a single tool, with native SDKs for Python, JavaScript, Java, and Go.

What sets Langfuse apart is its combination of open-source self-hosting and a polished cloud product with unlimited users on every paid tier. Platforms that charge per seat get more expensive as headcount grows, so Langfuse can be significantly cheaper for larger teams.

Key strengths:

- Unlimited users on all paid plans. The $29/month Core plan supports your entire team, not just one developer.

- Complete prompt management with versioning, a playground for testing, and the ability to run experiments comparing prompt variants against datasets.

- MIT-licensed open-source core. Self-host on your own infrastructure with no licensing fees and no feature restrictions.

Key limitations:

- The free Hobby tier is limited to 50,000 observation units per month with 30-day retention and only 2 users.

- SOC 2 and HIPAA compliance are only available on the Pro tier ($199/month) and above.

Best for: Teams that want an all-in-one platform (tracing, prompts, evals) without per-seat pricing. Particularly strong for teams that want to self-host.

Pricing: Hobby: free (50k units/month, 2 users). Core: $29/month (100k units, unlimited users). Pro: $199/month (3-year retention, compliance). Enterprise: $2,499/month. Overage: $8 per 100k units on all paid tiers.
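As a worked example of the usage-based arithmetic, the sketch below estimates a monthly bill on the Core plan from the figures quoted above ($29 base, 100k units included, $8 per additional 100k units). It assumes overage is billed proportionally rather than in whole 100k blocks, which the pricing summary here does not specify, and the function name is illustrative.

```python
def langfuse_core_monthly_cost(units: int) -> float:
    """Estimated Core plan bill in USD for a month's observation units."""
    base_fee = 29.0          # Core plan monthly price quoted above
    included_units = 100_000
    overage_per_100k = 8.0   # overage rate quoted above
    extra_units = max(0, units - included_units)
    # Assumption: overage is pro-rated, not rounded up to full 100k blocks.
    return base_fee + extra_units / 100_000 * overage_per_100k


for units in (80_000, 450_000, 2_000_000):
    print(f"{units:>9,} units -> ${langfuse_core_monthly_cost(units):,.2f}")
```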

4. HoneyHive

HoneyHive is an OpenTelemetry-native platform focused on production safety and continuous improvement. While other tools emphasize development-time debugging, HoneyHive puts extra weight on what happens after deployment: automated alerts, human review queues, and feedback loops that route failing outputs back into training data.

Key strengths:

- Production automation rules that trigger when cost spikes, safety violations, or quality drops are detected. Failing outputs can be automatically routed to human review queues.

- Session replay with graph and timeline views for debugging multi-agent interactions. You can trace how agents coordinate across multiple steps and tool calls.

- Native framework support for LangChain, CrewAI, Google ADK, AWS Strands, and others.
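The automation rules described in the first strength can be pictured with a small sketch like the one below. It is not HoneyHive's API: the `Event` fields, thresholds, and in-memory queue are hypothetical stand-ins meant only to show the shape of "check each event against rules, route violations to human review."

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class Event:
    trace_id: str
    cost_usd: float
    quality_score: float  # 0.0 (worst) to 1.0 (best)
    safety_flagged: bool


# Hypothetical thresholds; a real deployment would tune these per application.
MAX_COST_USD = 0.25
MIN_QUALITY = 0.7


def violations(event: Event) -> list[str]:
    """Names of the rules this event breaks, in a fixed order."""
    reasons = []
    if event.cost_usd > MAX_COST_USD:
        reasons.append("cost_spike")
    if event.safety_flagged:
        reasons.append("safety_violation")
    if event.quality_score < MIN_QUALITY:
        reasons.append("quality_drop")
    return reasons


review_queue: deque = deque()


def process(event: Event) -> None:
    """Route any event that breaks a rule to the human review queue."""
    reasons = violations(event)
    if reasons:
        review_queue.append((event.trace_id, reasons))


for event in [
    Event("t1", cost_usd=0.02, quality_score=0.90, safety_flagged=False),
    Event("t2", cost_usd=0.40, quality_score=0.50, safety_flagged=False),
    Event("t3", cost_usd=0.01, quality_score=0.95, safety_flagged=True),
]:
    process(event)

print(list(review_queue))
```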

Key limitations:

- Enterprise pricing starts around $50,000 per year, which puts the full platform out of reach for smaller teams.

- Smaller community and fewer tutorials compared to LangSmith or Langfuse. You'll rely more on official docs than Stack Overflow answers.

Best for: Teams running LLM applications in production where safety, compliance, and continuous quality improvement are priorities.

Pricing: Free tier with 10,000 events/month. Custom enterprise pricing for higher volumes and deployment options.

5. Helicone

Helicone takes the simplest possible approach to LLM observability: it's a proxy. Change your API base URL to point through Helicone, and every request to OpenAI, Anthropic, Google, or any of 100+ supported providers gets logged automatically. No SDK changes, no decorator functions, no framework-specific instrumentation.

This simplicity comes with a tradeoff. Helicone captures request-level data (input, output, latency, tokens, cost) but doesn't decompose multi-step agent workflows into nested spans the way LangSmith or Arize do.
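The base URL swap can be illustrated without any SDK. The sketch below builds the same chat request twice, once for the provider directly and once through a proxy, and sends neither. The proxy URL and the `Helicone-Auth` header are assumptions based on Helicone's commonly documented OpenAI integration and should be confirmed against the current docs; the keys and model name are placeholders.

```python
import json
import urllib.request

DIRECT_BASE_URL = "https://api.openai.com/v1"
# Assumed proxy endpoint; verify against Helicone's current documentation.
PROXY_BASE_URL = "https://oai.helicone.ai/v1"


def build_chat_request(base_url, provider_key, prompt, proxy_key=None):
    """Build (but do not send) a chat completion request for the given base URL."""
    headers = {
        "Authorization": f"Bearer {provider_key}",
        "Content-Type": "application/json",
    }
    if proxy_key is not None:
        # Assumed header name for authenticating with the proxy.
        headers["Helicone-Auth"] = f"Bearer {proxy_key}"
    body = json.dumps({
        "model": "gpt-4o-mini",  # placeholder model name
        "messages": [{"role": "user", "content": prompt}],
    }).encode("utf-8")
    return urllib.request.Request(
        f"{base_url}/chat/completions", data=body, headers=headers, method="POST"
    )


direct = build_chat_request(DIRECT_BASE_URL, "sk-placeholder", "Hello")
proxied = build_chat_request(
    PROXY_BASE_URL, "sk-placeholder", "Hello", proxy_key="hk-placeholder"
)

print(direct.full_url)
print(proxied.full_url)
print("same payload:", direct.data == proxied.data)
```

The request body is identical in both cases. Only the destination and one extra header change, which is also why the proxy sees individual API calls and not the orchestration logic around them.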

Key strengths:

- One-line integration. Change your base URL and you're logging. Works with any language, any framework, any provider.

- Built-in AI Gateway with intelligent routing that automatically selects the cheapest available provider for equivalent models. The Model Registry shows real-time pricing across providers.

- Prompt versioning and deployment through the gateway, so you can update prompts without code changes.

Key limitations:

- Less suited for debugging complex agent logic with multiple internal steps. It sees API calls, not the orchestration between them.

- Free tier is limited to 10,000 requests per month with 7-day retention.

Best for: Developers who want instant observability and cost tracking without touching their application code. Great as a first observability layer.

Pricing: Free: 10,000 requests/month, 7-day retention. Pro: $79/month (unlimited seats, 1-month retention). Team: $799/month with extended features.

6. Braintrust

Braintrust positions itself as the end-to-end AI quality platform, combining tracing, evaluation, and an AI assistant called Loop that analyzes your logs and suggests improvements. It recently raised $80M at an $800M valuation (February 2026), which signals strong enterprise traction.

The standout feature is the free tier: 1 million trace spans for unlimited users. On raw counts that is 200 times LangSmith's 5,000 traces and 20 times Langfuse's 50,000 units, although spans, traces, and units are counted differently, so the comparison is approximate.

Key strengths:

- 1 million free trace spans with unlimited users. The most generous free tier of any platform on this list.

- 25+ built-in scoring functions for accuracy, relevance, safety, and custom criteria. Loop, the AI assistant, can generate custom scorers from natural language descriptions.

- Native SDK support for 13+ frameworks: LangChain, LlamaIndex, Vercel AI SDK, OpenAI Agents SDK, Pydantic AI, DSPy, Google ADK, CrewAI, and more.

Key limitations:

- The Pro plan jumps to $249/month, which is steep if you need features beyond the free tier.

- Newer platform with less battle-tested production deployment history than Arize or W&B.

Best for: Teams that want generous free tracing with strong built-in evaluation, especially if you're using multiple frameworks.

Pricing: Free: 1M spans, 10k scores, unlimited users. Pro: $249/month with unlimited spans and scores.

7. Weights & Biases (Weave)

Weights & Biases has been the default experiment tracking platform for ML teams for years. Weave is their LLM-specific observability product, extending W&B's tracking approach to generative AI workloads.

If your team already uses W&B for model training, fine-tuning, or traditional ML, Weave integrates directly into your existing workflows. You get LLM tracing alongside your training runs, hyperparameter sweeps, and model registry in one dashboard.

Key strengths:

- Tight integration with the broader W&B ecosystem. If you're fine-tuning models, you can trace both training and inference in the same platform.

- Automatic logging of all inputs, outputs, code, and metadata at a granular level. Traces are organized to show the full execution flow of your LLM calls.

- Python and TypeScript SDKs with framework integrations including Google ADK and Vercel AI SDK.

Key limitations:

- Primarily designed for teams already in the W&B ecosystem. If you're not training models, the broader platform adds complexity you don't need.

- LLM-specific features are newer and less mature than W&B's traditional ML tracking capabilities.

Best for: ML engineering teams that train or fine-tune their own models and want LLM observability in the same platform they already use.

Pricing: Free for personal use with included credits. Team and Enterprise plans available with per-seat pricing.

How to Store and Share Agent Artifacts

Observability platforms trace what your agent does. But agents also produce artifacts: generated reports, processed documents, datasets, evaluation results. Those artifacts need somewhere to live, and they need to be accessible to both the agent that created them and the humans who review them.

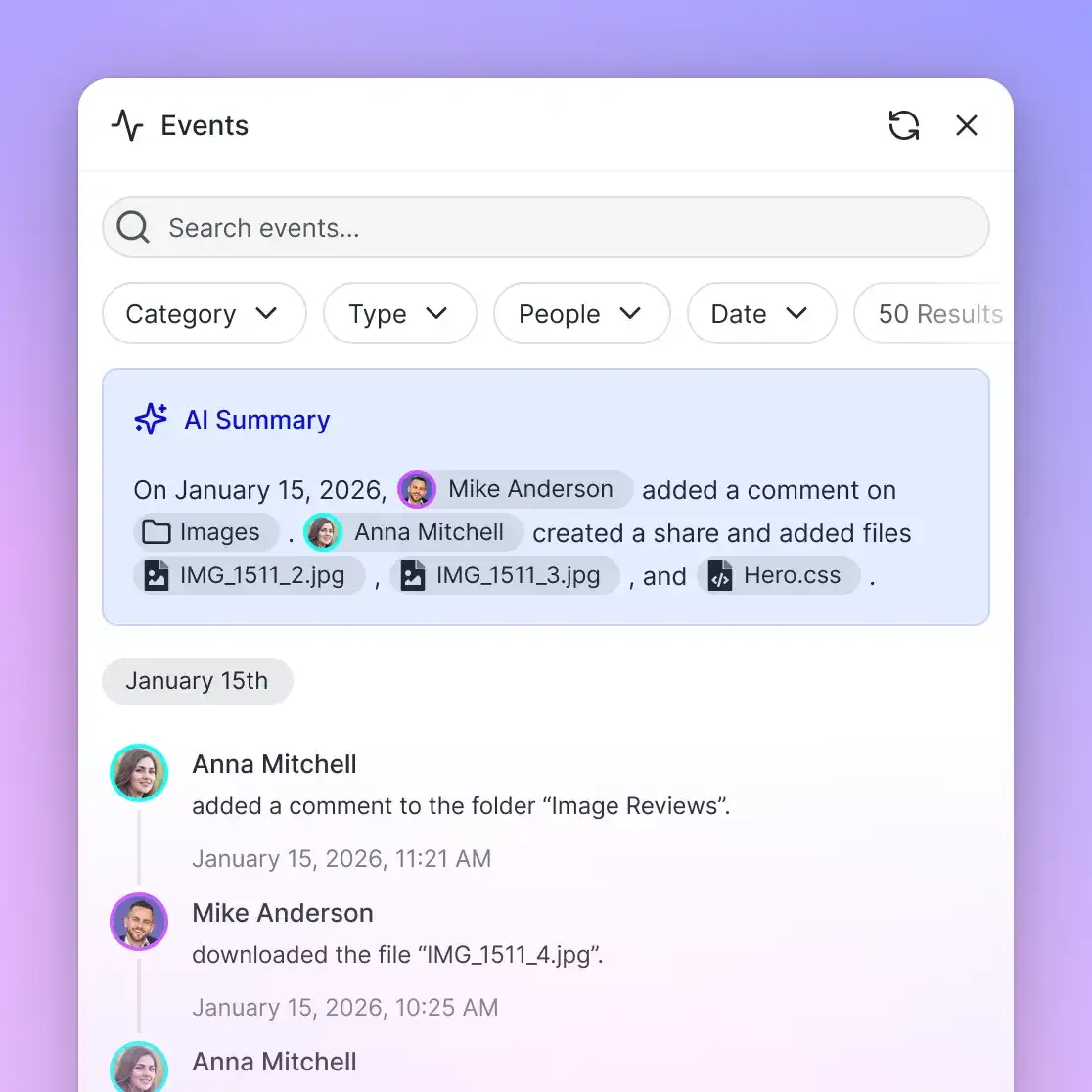

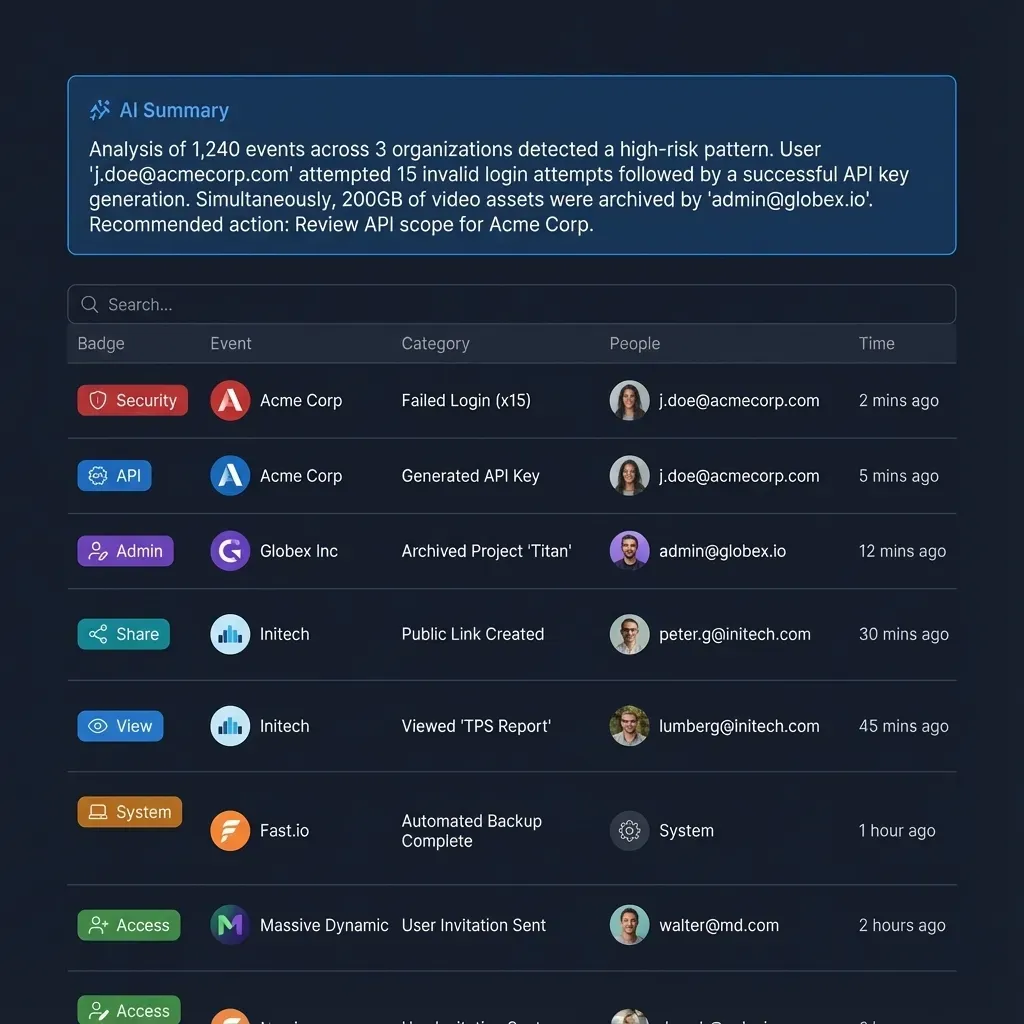

Fast.io fills this gap as an intelligent workspace for agentic teams. While LangSmith traces your agent's LLM calls, Fast.io stores the files your agent produces and makes them immediately available for human review.

What this looks like in practice:

- An agent runs a daily evaluation pipeline, scores 500 outputs, and writes the results to a Fast.io workspace. A human reviewer opens the workspace and sees the report without downloading anything.

- A content generation agent writes draft articles, uploads them to a shared workspace, and the editorial team reviews and approves them through the same interface.

- An agent processes customer data, generates a summary report, and uses ownership transfer to hand the entire workspace to a client.

Fast.io's MCP server gives agents native access to workspace, storage, and AI operations. Enable Intelligence Mode on a workspace and uploaded files are automatically indexed for semantic search, so agents or humans can ask questions about the contents with cited answers.

The free agent tier includes 50 GB of storage, 5,000 monthly credits, and 5 workspaces with no credit card required. It pairs naturally with any observability platform on this list: trace your agent's execution with LangSmith or Arize, store and share the artifacts with Fast.io.

Which Platform Should You Choose?

The right platform depends on three things: your framework, your team size, and whether you need evaluation or just monitoring.

Start with Helicone if you want observability running in five minutes. Change your API base URL and you're logging. It won't trace multi-step agent logic, but it gives you immediate visibility into costs and latency. Good enough for prototyping and early production.

Choose LangSmith if you're building with LangChain or LangGraph. The automatic instrumentation is unmatched, and the evaluation framework is mature. Watch the trace volume costs if you're handling high traffic.

Choose Langfuse if per-seat pricing is a concern. Unlimited users on the $29/month plan make it the most affordable option for teams with more than a few developers. The open-source self-hosting option adds flexibility.

Choose Arize Phoenix if you want to self-host everything or need deep embedding analysis for RAG debugging. The open-source core has no usage limits, and the managed platform adds enterprise compliance.

Choose Braintrust if you want the most generous free tier and strong built-in evaluation. One million free spans covers a lot of development and testing before you need to upgrade.

Choose HoneyHive if production safety is your priority. The automated alerting and human review routing are designed for teams that can't afford quality regressions.

Choose W&B Weave if your team already lives in the Weights & Biases ecosystem for model training and wants LLM observability in the same dashboard.

For many production applications, a combination works best. Use a tracing platform (LangSmith, Langfuse, or Braintrust) for execution visibility, add Helicone as a cost-control gateway, and use Fast.io for persistent artifact storage and human handoff.

Frequently Asked Questions

What is the best tool for LLM tracing?

It depends on your framework. LangSmith is the strongest choice for LangChain and LangGraph users because of its automatic instrumentation. For framework-agnostic tracing, Arize Phoenix and Langfuse both use OpenTelemetry and work with any LLM provider. If you want the fastest setup, Helicone's proxy approach requires only a URL change.

Is LangSmith free?

LangSmith has a free Developer tier that includes 5,000 base traces per month with 14-day retention and one seat. This is enough for individual developers prototyping an application, but teams will quickly need the Plus plan for additional seats and trace volume.

What is the difference between per-trace and per-seat pricing?

Per-trace pricing (LangSmith, Helicone) charges based on how many traces or requests you log, so costs scale with traffic volume. Per-seat pricing (W&B) charges based on team size. Usage-based pricing (Langfuse, Arize) also scales with traffic but meters at a finer grain, charging per observation unit or span. For high-traffic applications with small teams, per-seat is usually cheaper. For large teams with moderate traffic, per-trace or usage-based is usually cheaper.
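The trade-off is easy to check with arithmetic. The sketch below compares the two models using hypothetical prices ($1.00 per 1,000 traces and $50 per seat per month) that are chosen for round numbers and do not correspond to any vendor's rate card.

```python
# Hypothetical prices for illustration only.
PRICE_PER_1K_TRACES = 1.00
PRICE_PER_SEAT = 50.00


def per_trace_cost(traces: int) -> float:
    """Monthly cost when billed purely on trace volume."""
    return traces / 1000 * PRICE_PER_1K_TRACES


def per_seat_cost(seats: int) -> float:
    """Monthly cost when billed purely on team size."""
    return seats * PRICE_PER_SEAT


def cheaper_model(traces: int, seats: int) -> str:
    """Which billing model costs less for this traffic and team size."""
    return "per-trace" if per_trace_cost(traces) < per_seat_cost(seats) else "per-seat"


def break_even_traces(seats: int) -> float:
    """Monthly trace volume at which both models cost the same."""
    return per_seat_cost(seats) / PRICE_PER_1K_TRACES * 1000


# Small team, high traffic versus large team, moderate traffic.
print(cheaper_model(traces=2_000_000, seats=3))
print(cheaper_model(traces=200_000, seats=30))
print(f"break-even for 3 seats: {break_even_traces(3):,.0f} traces/month")
```

Plugging in a vendor's actual rates and your own traffic forecast gives the break-even point for your team.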

Can I use these tools with local models like Llama?

Yes. Most platforms support any model you can call via an API, including local models running on Ollama, vLLM, or similar serving frameworks. Arize Phoenix is particularly well-suited for local models because the open-source version runs entirely on your own infrastructure with no data leaving your network.

Do I need a separate tool for prompt management?

Not necessarily. LangSmith, Langfuse, and Helicone all include prompt versioning and management alongside their observability features. Langfuse adds a playground for testing prompt variants. If you only need prompt management without tracing, a dedicated tool like PromptLayer might be simpler, but most teams benefit from having prompts and traces in the same platform.

How do observability platforms handle sensitive data?

Most platforms offer data masking or PII redaction. Arize Phoenix and Langfuse can be self-hosted to keep all data on your infrastructure. Langfuse Pro includes HIPAA and SOC 2 compliance. Arize AX Enterprise offers SOC 2 and HIPAA with configurable data residency. For the most sensitive workloads, self-hosting Phoenix or Langfuse is the safest option.
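If you would rather mask data before it leaves your application, a client-side pass is one option. The sketch below is a simple regex illustration, not how any platform on this list implements redaction; the two patterns cover only email addresses and one US-style phone format, and real PII detection needs far broader coverage.

```python
import re

# Illustrative patterns only; they will miss many real-world PII formats.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
US_PHONE = re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b")


def redact(text: str) -> str:
    """Replace emails and phone numbers with placeholder tokens."""
    text = EMAIL.sub("[EMAIL]", text)
    return US_PHONE.sub("[PHONE]", text)


prompt = "Contact jane.doe@example.com or 555-123-4567 about the invoice."
print(redact(prompt))
# prints: Contact [EMAIL] or [PHONE] about the invoice.
```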
