How to Extract Metadata in Real Time on File Upload

Real-time metadata extraction on file upload parses file properties the moment a file is received, making metadata available for search, validation, and routing before the user leaves the upload screen. This guide covers the architecture, implementation patterns, and tooling for building extraction into your upload flow, including partial parsing for large files and AI-powered structured extraction.

Why Metadata Should Be Ready Before the Upload Screen Closes

Most file management systems treat metadata extraction as an afterthought. A file lands in storage, a background job picks it up minutes later, and eventually the metadata shows up in a search index or database. That gap between upload and availability creates real problems.

Consider a legal team uploading contracts for review. If the system can't immediately surface the contract date, counterparty name, and document type, someone has to manually tag each file or wait for batch processing to finish. During that wait, the files sit in a folder with no context, invisible to search, and impossible to route automatically.

Users expect upload feedback within 2 seconds for files under 50 MB. That expectation sets the performance budget for synchronous extraction. If your metadata pipeline can return basic file properties (dimensions, page count, author, creation date, MIME type) within that window, users see the result before they navigate away. The file feels "processed" immediately.

This matters for three reasons:

- Validation happens at upload time. You can reject files that don't meet requirements (wrong format, missing fields, too low resolution) before they enter your system. Catching problems early is cheaper than cleaning them up downstream.

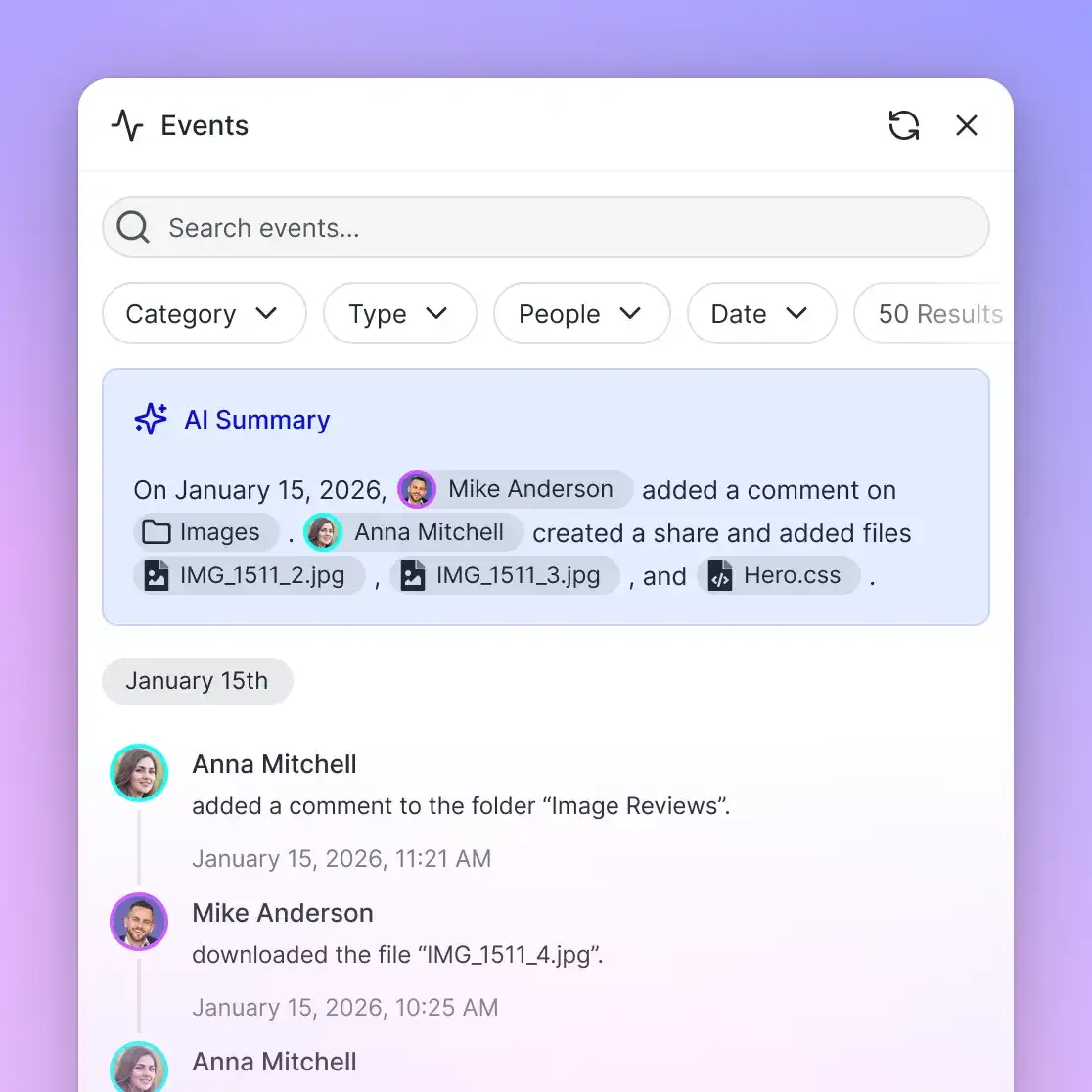

- Routing becomes automatic. Metadata like document type, department tags, or project codes can drive where the file goes next. An invoice routes to accounts payable. A contract routes to legal review. No manual sorting required.

- Search works immediately. If metadata is indexed at upload time, the file is discoverable the moment the upload completes. No waiting for a nightly indexing job.

The alternative, batch processing after upload, works fine for archives and bulk imports where nobody is watching. But for interactive workflows where people upload files and expect to work with them right away, the delay is a UX problem that compounds across every file and every user.

How Upload-Time Extraction Works

Upload-time extraction follows a pipeline that runs inline with the HTTP request. The upload arrives, the server inspects the file, and the response includes extracted metadata alongside the upload confirmation. The core stages look like this:

- Receive the upload stream. The server accepts the file via multipart form data or a chunked upload protocol like tus. As bytes arrive, the server writes them to temporary storage or streams them directly to the extractor.

- Detect the file format. Before parsing, the server reads the first few bytes (the "magic bytes" or file signature) to determine the actual format. Don't rely on the file extension or the client-provided MIME type. A file named report.pdf might actually be a ZIP archive. Libraries like file-type (Node.js) or python-magic (Python) handle this detection reliably.

- Run a lightweight extractor. Based on the detected format, the server invokes a format-specific parser that reads only the metadata fields, not the full file contents. For images, this means reading EXIF headers. For PDFs, it means parsing the document info dictionary. For Office documents, it means reading the core properties XML from the ZIP container.

- Return metadata in the response. The extracted metadata goes back to the client as part of the upload response, typically as JSON alongside the file ID and storage URL. The user sees a preview of what the system knows about their file.

- Queue deep extraction. For computationally expensive operations (OCR, AI classification, full-text indexing), the server enqueues an asynchronous job. The user gets basic metadata instantly, and richer metadata appears as the background job completes.

This two-phase approach (synchronous lightweight extraction followed by asynchronous deep extraction) gives you the best of both worlds. Users get immediate feedback, and your system gets the thorough analysis it needs without blocking the upload response.
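The stages above can be sketched as a single function. This is a minimal illustration rather than a reference implementation: `processUpload`, `detect`, `extractors`, `queue`, and the response fields are all hypothetical names chosen for the example, and the caller is assumed to supply real detection and parsing code.

```javascript
// Two-phase upload handling: fast metadata now, expensive work later.
// `detect`, `extractors`, and `queue` are hypothetical dependencies supplied
// by the caller; none of them is a real library API.
function processUpload(file, { detect, extractors, queue }) {
  // Detect the format from the bytes, not the filename or client MIME type.
  const mime = detect(file.bytes);

  // Run the lightweight extractor for this format, if one is registered.
  const extract = extractors[mime];
  const metadata = extract ? extract(file.bytes) : {};

  // Defer OCR, classification, and full-text indexing to a background job.
  queue.push({ fileId: file.id, mime, tasks: ["ocr", "classify", "index"] });

  // This object is what the client receives in the upload response.
  return {
    fileId: file.id,
    mime,
    size: file.bytes.length,
    metadata,
    deepExtraction: "queued",
  };
}
```

Passing the detector and extractors in as arguments keeps the handler independent of any particular parsing library, which also makes the sync path easy to test with stubs.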

Building a Lightweight Extraction Pipeline

The key constraint for synchronous extraction is time. Adding 100 to 500 milliseconds per file is acceptable for most upload flows. Beyond that, users start noticing the delay. That budget shapes which tools and approaches work.

Format Detection

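Start with magic byte detection. The first 4 to 12 bytes of most file formats contain a signature that identifies the type. ZIP-based formats such as DOCX, XLSX, and PPTX share a single signature, so a detection library has to look deeper into the container to tell them apart. With file-type, detection is a single call: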

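    import { fileTypeFromBuffer } from "file-type";

    // Identify the real format from the file's leading bytes.
    // Falls back to a generic binary type when no signature matches.
    async function detectFormat(buffer) {
      const type = await fileTypeFromBuffer(buffer);
      return type ? type.mime : "application/octet-stream";
    }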

This runs in under a millisecond and protects you from trusting user-supplied MIME types, which can be spoofed or simply wrong.
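To show what a detection library does under the hood, here is a dependency-free sketch that compares a file's leading bytes against a small signature table. The table covers only five formats and the names `KNOWN_SIGNATURES` and `sniffMime` are made up for this example; a production system should keep using a maintained library.

```javascript
// A few well-known signatures. Real detection libraries cover far more
// formats and also inspect container contents; this table is illustrative.
const KNOWN_SIGNATURES = [
  { mime: "image/png", magic: [0x89, 0x50, 0x4e, 0x47, 0x0d, 0x0a, 0x1a, 0x0a] },
  { mime: "image/jpeg", magic: [0xff, 0xd8, 0xff] },
  { mime: "image/gif", magic: [0x47, 0x49, 0x46, 0x38] }, // "GIF8"
  { mime: "application/pdf", magic: [0x25, 0x50, 0x44, 0x46, 0x2d] }, // "%PDF-"
  { mime: "application/zip", magic: [0x50, 0x4b, 0x03, 0x04] }, // also DOCX, XLSX, PPTX
];

// Returns the MIME type whose signature matches the start of `bytes`
// (a Uint8Array or Node.js Buffer), or a generic binary type otherwise.
function sniffMime(bytes) {
  for (const { mime, magic } of KNOWN_SIGNATURES) {
    if (bytes.length >= magic.length && magic.every((b, i) => bytes[i] === b)) {
      return mime;
    }
  }
  return "application/octet-stream";
}
```

A file uploaded as report.pdf whose first four bytes are 0x50 0x4B 0x03 0x04 would come back as application/zip here, which is exactly the mismatch the upload handler should catch.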

Header-Only Parsing

Most metadata lives in file headers, not in the body. A 500 MB video file might have all its resolution, codec, duration, and creation date information in the first 64 KB. Reading the entire file to get this data wastes time and memory.

WeTransfer's open-source format_parser library demonstrates this approach well. It reads the minimum number of bytes needed to extract metadata, fetching from the beginning of the file first and only seeking to the end or middle when the format requires it. The design principle is straightforward: read as little as possible, as cheaply as possible.

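For images, EXIF data sits in a defined header block. Libraries like exif-reader (Node.js) or Pillow (Python) can parse it without decoding the full image. PDFs are less uniform: the trailer that points to the document info dictionary sits at the end of the file, so title, author, creation date, and page count usually require the tail of the file as well as the head (linearized, "fast web view" PDFs move key structures toward the front). Libraries like pdf-parse are typically handed the complete file buffer, so for PDFs plan on either a full read or a parser that can work from byte ranges.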
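For a concrete sense of how little data header-only parsing can need, a PNG stores its pixel dimensions in the IHDR chunk, which occupies a fixed position in the first 24 bytes of the file. The sketch below reads them from a partial buffer; the function name `readPngDimensions` is an illustrative choice, not part of any library.

```javascript
// Reads width and height from the first 24 bytes of a PNG.
// Layout: 8-byte signature, 4-byte chunk length, 4-byte chunk type ("IHDR"),
// then width and height as big-endian 32-bit integers.
function readPngDimensions(bytes) {
  const PNG_SIGNATURE = [0x89, 0x50, 0x4e, 0x47, 0x0d, 0x0a, 0x1a, 0x0a];
  if (bytes.length < 24) return null; // header not fully received yet
  if (!PNG_SIGNATURE.every((b, i) => bytes[i] === b)) return null;

  // Bytes 12..15 hold the first chunk's type, which must be "IHDR".
  const type = String.fromCharCode(bytes[12], bytes[13], bytes[14], bytes[15]);
  if (type !== "IHDR") return null;

  // Respect byteOffset so the function also works on subarrays and Buffers.
  const view = new DataView(bytes.buffer, bytes.byteOffset, bytes.byteLength);
  return { width: view.getUint32(16), height: view.getUint32(20) };
}
```

Because the function returns null until 24 bytes are available, it can be called repeatedly on a growing buffer during a streaming upload.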

Choosing Your Extractor Stack

The right tools depend on which formats you handle most often:

- Images (JPEG, PNG, TIFF, HEIC): sharp or exif-reader for Node.js, Pillow for Python. Extract dimensions, color space, DPI, camera model, GPS coordinates, and creation date.

- PDFs: pdf-parse for Node.js, PyPDF2 (now maintained as pypdf) or pdfminer (maintained as pdfminer.six) for Python. Extract page count, author, title, creation and modification dates, and whether the document is encrypted.

- Office documents (DOCX, XLSX, PPTX): These are ZIP containers with XML metadata. Parse docProps/core.xml directly for author, title, created date, and modification date without decompressing the full archive.

- Video and audio: ffprobe (from FFmpeg) extracts codec, duration, resolution, bitrate, and container metadata. For sync extraction, limit the probe to container and stream metadata with -show_format -show_streams.
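As one example from this list, the ffprobe step might look like the sketch below. The flags are standard ffprobe options, and the field names read from the report (format.format_name, format.duration, streams[].codec_type, and so on) follow ffprobe's JSON output. The helper names `ffprobeArgs` and `summarizeProbe`, and the shape of the summary object, are assumptions made for illustration.

```javascript
// Arguments for a metadata-only probe that prints a JSON report.
// These are standard ffprobe flags; the function name is illustrative.
function ffprobeArgs(path) {
  return ["-v", "error", "-print_format", "json", "-show_format", "-show_streams", path];
}

// Reduces a parsed ffprobe JSON report to the few fields an upload
// response typically needs. Missing values come back as null.
function summarizeProbe(report) {
  const streams = report.streams ?? [];
  const video = streams.find((s) => s.codec_type === "video");
  const audio = streams.find((s) => s.codec_type === "audio");
  return {
    container: report.format?.format_name ?? null,
    // ffprobe reports duration as a string of seconds, e.g. "12.500000".
    durationSeconds: report.format?.duration ? Number(report.format.duration) : null,
    videoCodec: video?.codec_name ?? null,
    width: video?.width ?? null,
    height: video?.height ?? null,
    audioCodec: audio?.codec_name ?? null,
  };
}
```

In a real handler, the argument list would be passed to something like Node's child_process.execFile("ffprobe", ffprobeArgs(path)) with a timeout, and the resulting stdout parsed with JSON.parse before calling summarizeProbe.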

Apache Tika covers all of these formats through a single interface, which simplifies your code if you handle diverse file types. The tradeoff is that Tika is a Java-based server process, so it adds operational complexity compared to native libraries.

Handling Large Files with Partial Parsing

Files over 50 MB present a specific challenge. The upload itself takes seconds or minutes, and blocking the response until the full file is received and parsed defeats the purpose of real-time extraction.

Three patterns handle this well:

Stream-Based Extraction

Instead of waiting for the full upload to complete, start extracting metadata from the byte stream as it arrives. Multipart upload parsers emit chunks sequentially, and many file formats store metadata headers at the beginning of the stream. You can extract EXIF data from a JPEG after receiving the first few kilobytes, well before the full image has landed.

This requires a parser that can work with incomplete data. Not all libraries support streaming input, so check whether your extractor can accept a readable stream or a partial buffer. For formats that store metadata at the end of the file (like some MP4 containers that put the moov atom last), streaming extraction won't work and you'll need to fall back to async processing.
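One way to approach stream-based extraction is to buffer only the header prefix and hand it to a parser as soon as enough bytes have arrived. The class below is a sketch under that assumption; `HeaderCollector`, `needed`, and `onHeader` are hypothetical names, and the chunks are assumed to be Uint8Array or Buffer instances delivered in order.

```javascript
// Collects upload chunks until `needed` bytes are available, then calls
// `onHeader` exactly once with that prefix. All names are hypothetical.
class HeaderCollector {
  constructor(needed, onHeader) {
    this.needed = needed;
    this.onHeader = onHeader;
    this.chunks = [];
    this.received = 0;
    this.done = false;
  }

  push(chunk) {
    if (this.done) return; // header already parsed; ignore the file body
    this.chunks.push(chunk);
    this.received += chunk.length;
    if (this.received < this.needed) return; // keep waiting for more bytes

    // Copy the first `needed` bytes out of the buffered chunks.
    const header = new Uint8Array(this.needed);
    let offset = 0;
    for (const c of this.chunks) {
      const slice = c.subarray(0, this.needed - offset);
      header.set(slice, offset);
      offset += slice.length;
      if (offset === this.needed) break;
    }

    this.done = true;
    this.chunks = []; // release buffered data
    this.onHeader(header);
  }
}
```

In a Node.js handler, push would be called from the request stream's data event. A fuller version would also handle streams that end before `needed` bytes arrive, for example by parsing whatever was received or deferring to the async path.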

Chunked Upload with Early Extraction

Protocols like tus split large uploads into chunks. After the first chunk arrives (typically 1 to 5 MB), you can run extraction on that initial segment while the remaining chunks continue uploading in parallel. The metadata response goes back to the client immediately, and the upload continues in the background.

This is the most practical approach for files over 100 MB. The first chunk contains enough data for format detection and header parsing, and the upload doesn't stall waiting for extraction to finish.
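With a chunked protocol, early extraction comes down to noticing the chunk that starts at offset 0 and parsing it once. In tus, each PATCH request carries an Upload-Offset header, so the `offset` below could be taken from it. The rest is a hypothetical sketch: `createChunkHandler`, `uploadId`, and `extract` are illustrative names, and an in-memory Map stands in for whatever store a real server would use.

```javascript
// Runs header extraction once per upload, on the chunk that starts the file.
// `extract` is a hypothetical header parser supplied by the caller.
function createChunkHandler(extract) {
  const metadataByUpload = new Map();

  return function onChunk({ uploadId, offset, bytes }) {
    // Only the chunk that begins at offset 0 contains the file header.
    if (offset === 0 && !metadataByUpload.has(uploadId)) {
      metadataByUpload.set(uploadId, extract(bytes));
    }
    // Later chunks are stored as usual; return the metadata if it is known.
    return metadataByUpload.get(uploadId) ?? null;
  };
}
```

The handler returns the cached metadata on every later chunk, so the server can include it in each chunk acknowledgement without re-parsing.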

Range-Based Reads from Object Storage

If files land in object storage (S3, GCS, R2) before extraction runs, use HTTP Range requests to fetch only the bytes you need. Request the first 64 KB for header metadata, and if the format requires a trailer (like some TIFF files), request the last 8 KB separately. Two small HTTP requests are far cheaper than downloading a multi-gigabyte file.
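The two range requests can be sketched with standard HTTP Range syntax. Byte ranges are inclusive, so the first 64 KB is bytes=0-65535, and a suffix range such as bytes=-8192 asks for the final 8 KB. The helper names are hypothetical, and `url` is assumed to be a presigned or otherwise readable object URL on a server that honors Range headers.

```javascript
// Builds Range header values for the first `headBytes` and last `tailBytes`
// of an object. Byte ranges are inclusive, hence the "- 1".
function metadataRanges(headBytes = 64 * 1024, tailBytes = 8 * 1024) {
  return {
    head: `bytes=0-${headBytes - 1}`,
    tail: `bytes=-${tailBytes}`, // suffix range: the final N bytes
  };
}

// Fetches both slices in parallel. `fetchImpl` defaults to the global fetch
// (Node.js 18+ or browsers) and can be swapped for a stub in tests.
async function fetchHeadAndTail(url, fetchImpl = fetch) {
  const ranges = metadataRanges();
  const get = async (range) => {
    const res = await fetchImpl(url, { headers: { Range: range } });
    // A server that honors the range replies with 206 Partial Content.
    if (res.status !== 206) throw new Error(`Range not honored: HTTP ${res.status}`);
    return new Uint8Array(await res.arrayBuffer());
  };
  const [head, tail] = await Promise.all([get(ranges.head), get(ranges.tail)]);
  return { head, tail };
}
```

Treating anything other than 206 as an error avoids accidentally downloading the entire object when a server or proxy ignores the Range header and responds with 200.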

The common thread across all three patterns is the same: read the minimum necessary bytes. A well-tuned extraction pipeline should process the metadata portion of any file in under 500 milliseconds, regardless of total file size.

Extract structured metadata from every upload

Fast.io Metadata Views use AI to parse documents, images, and scanned files into structured, queryable data. 50 GB free, no credit card required.

Choosing Between Synchronous and Asynchronous Extraction

Not every metadata field needs to be extracted synchronously. The decision comes down to what the user or system needs immediately versus what can wait.

Extract synchronously (during upload, within the response):

- File type and MIME detection

- Dimensions, page count, duration

- Author, title, creation date

- Basic validation checks (is this actually a PDF? Does the image meet minimum resolution?)

These fields are fast to extract (typically under 200 ms) and are needed for the upload confirmation screen, file previews, and immediate routing decisions.

Extract asynchronously (after upload, via background job or webhook):

- Full-text content extraction and indexing

- OCR for scanned documents and images

- AI-powered classification and entity extraction

- Thumbnail and preview generation

- Virus scanning and content moderation

These operations take seconds to minutes and shouldn't block the upload response. Queue them as background jobs triggered by the upload event.

The boundary between sync and async should be driven by your latency budget. If your upload endpoint needs to respond in under 2 seconds and extraction adds 300 ms, you have room for a few lightweight parsers. If you're running OCR or sending the file to an AI model, that's a background job.
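The budget-driven split can be made explicit in code. The sketch below assumes each extraction task comes with a rough cost estimate; `planExtraction`, `estimatedMs`, and the task names in the usage note are invented for illustration, and real estimates would come from your own measurements.

```javascript
// Splits extraction tasks into a synchronous set and a deferred set.
// Tasks are considered in the order given (highest priority first); a task
// runs inline only if its estimated cost still fits the remaining budget.
function planExtraction(tasks, budgetMs) {
  const sync = [];
  const deferred = [];
  let remaining = budgetMs;
  for (const task of tasks) {
    if (task.estimatedMs <= remaining) {
      sync.push(task.name);
      remaining -= task.estimatedMs;
    } else {
      deferred.push(task.name); // becomes a background job
    }
  }
  return { sync, deferred, remainingMs: remaining };
}
```

With a 300 ms budget and tasks estimated at 1 ms (MIME detection), 50 ms (dimensions), 150 ms (document info), 8,000 ms (OCR), and 3,000 ms (classification), the first three would run inline and the last two would be queued.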

For webhook-triggered async extraction, tools like Fast.io webhooks can fire events on file upload, letting your extraction service react immediately without polling. That approach is covered in detail in a companion guide on webhook-based metadata extraction workflows.

The two patterns complement each other. Synchronous extraction gives users instant feedback. Asynchronous extraction builds the deep, structured metadata that powers search, compliance, and automation over time.

Structured Extraction with Fast.io Metadata Views

Building and maintaining format-specific parsers works when you control the pipeline and know exactly which fields you need. But many teams need to extract different fields from different document types, and the schema changes as business requirements evolve. Writing custom parsers for every new field is slow and brittle.

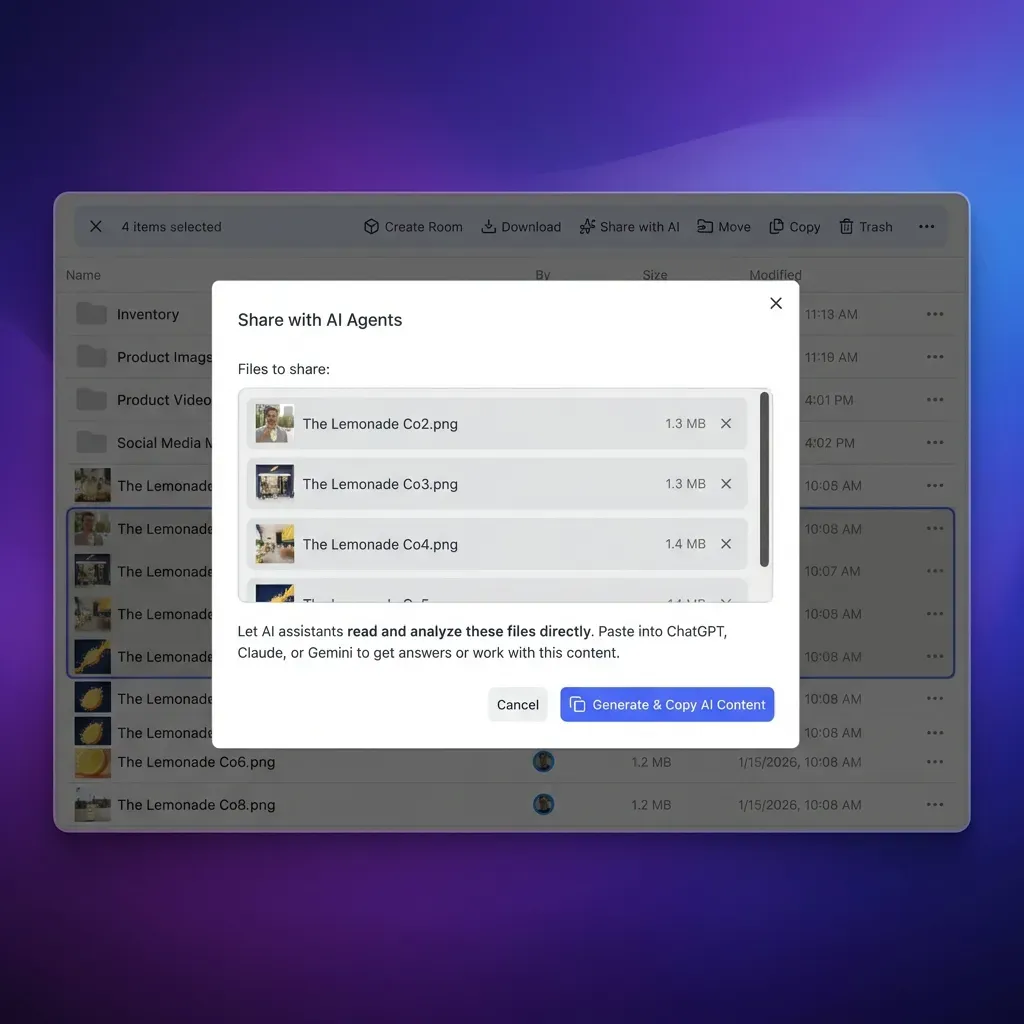

Metadata Views in Fast.io take a different approach. Instead of writing extraction rules, you describe the fields you want in natural language. The system uses AI to design a typed schema (supporting Text, Integer, Decimal, Boolean, URL, JSON, and Date/Time types) and then extracts those fields from every matching file in your workspace.

Here's what that looks like in practice. Say your team uploads insurance policy documents and needs to extract the policy number, coverage limit, effective date, and insured party name. Instead of building a custom PDF parser that looks for those fields in specific locations, you create a Metadata View and describe each column. The AI figures out where those values appear across different document layouts and populates a sortable, filterable spreadsheet.

This works across PDFs, images, Word documents, spreadsheets, presentations, scanned pages, and even handwritten notes. When your requirements change (say you need to also extract the deductible amount), you add a new column. Existing files are re-analyzed without reprocessing the entire pipeline.

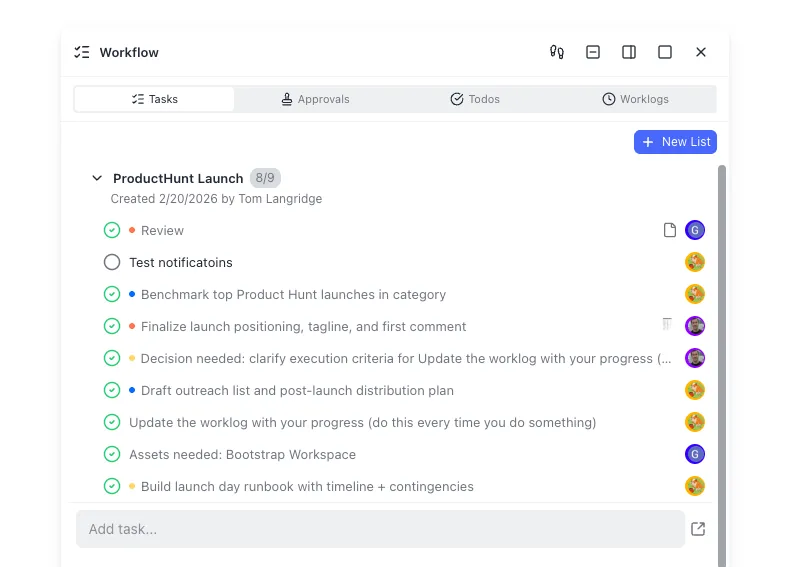

For agent-driven workflows, Metadata Views are accessible through Fast.io's MCP server. An agent can create a View, trigger extraction on uploaded files, and query the results programmatically. That means an AI agent handling document intake can upload a batch of contracts, define the fields it needs (counterparty, execution date, governing law), and query the structured results, all through the same workspace where the files live.

The combination of upload-time basic extraction and AI-powered structured extraction covers both sides of the metadata problem. Users get immediate feedback on file properties at upload time. The deeper, business-specific metadata follows within seconds as the AI extraction completes. Both layers feed into the same search index, so files are discoverable by any metadata field, whether it came from a header parser or an AI model.

Fast.io's free agent plan includes 50 GB of storage and 5,000 credits per month, which covers Metadata Views extraction, Intelligence Mode search, and MCP access. No credit card required.

Frequently Asked Questions

How do you extract metadata during file upload?

Read the file's header bytes as part of the upload handler, before writing the response. Use format-specific libraries (like exif-reader for images or pdf-parse for PDFs) to pull out the metadata fields; for many formats the first few kilobytes are enough. Return the extracted fields in the upload response so the user sees them immediately. Queue deeper extraction (OCR, AI classification) as a background job.

Can you parse metadata without downloading the full file?

Yes. Most file formats store metadata in headers near the beginning of the file. You can use HTTP Range requests to fetch just the first 64 KB from object storage, or process the initial chunk of a streaming upload. Some formats keep key structures at the end (certain MP4 containers, and PDFs, whose trailer sits at the end of the file), which requires either a second range request for the tail or deferring to async extraction.

What is the fastest way to extract file metadata on upload?

Magic byte detection for format identification runs in under a millisecond. Header-only parsing for EXIF, PDF properties, or Office document metadata typically completes in 50 to 200 milliseconds. The fastest approach combines format detection from the first 12 bytes with a targeted header parser that reads only the metadata block, skipping the file body entirely.

How does real-time metadata extraction improve file management?

It makes files searchable and routable the moment they arrive. Instead of waiting for batch processing, you can validate files at upload time (rejecting wrong formats or missing fields), automatically route documents to the right team or workflow, and let users search for newly uploaded files immediately. The result is fewer manual tagging steps and faster time to action.

What file types support real-time metadata extraction?

Images (JPEG, PNG, TIFF, HEIC) store EXIF and XMP metadata in headers. PDFs include a document info dictionary with author, title, and dates. Office documents (DOCX, XLSX, PPTX) are ZIP archives with XML metadata files. Video and audio containers (MP4, MOV, MP3) include codec, duration, and resolution metadata. Apache Tika provides a single interface for parsing metadata across more than a thousand formats.
